Executive Summary

The 2025 fraud landscape confirmed a pivotal shift: identity fraud is no longer primarily a financial nuisance committed by isolated actors. It has industrialized into a scalable, transnational enterprise.

Generative AI accelerates this shift by reducing the cost of producing convincing deception and compressing the attacker learning cycle. Failed attempts can be iterated into more effective ones quickly, then reproduced across many targets and workflows. The result is a rapid increase in the volume, realism, and operational polish of fraud attempts during sustained attacks. Synthetic identity creation can now be automated, and social engineering attacks can be scaled to reach more victims with greater efficiency.

Capability Shift: Generative AI Makes Fraud Scalable

Generative AI does not create new fraud objectives. It removes bottlenecks that historically kept fraud smaller: the time to produce believable artifacts, the skill needed to write persuasive narratives, and the technical effort required to deploy infrastructure.

In 2026, organizations should assume that content quality is no longer a reliable signal of legitimacy. Defensive approaches that lean on visual realism will continue to degrade as adversaries industrialize high-quality fakes.

LLMs and the Power of Scale

The integration of Large Language Models (LLMs) has fundamentally altered the threat landscape. Unlike the rigid, manual scripts of the past, LLMs allow threat actors to produce convincingly authentic content at unprecedented scale.

- Adaptive Conversational Flow: LLMs enable attackers to engage in dynamic, multi-turn conversations that adapt to a victim’s responses in real time, moving far beyond static templates.

- Elimination of Linguistic Signals: Traditional detection signals, such as poor grammar, spelling errors, or awkward phrasing, are largely eliminated as LLMs produce perfectly polished, professional, and contextually appropriate content.

- Hyper-Scalability: LLMs drive automated personalization at scale. A single actor can generate thousands of unique, contextually relevant messages in seconds, rendering detection based on reused scripts largely ineffective.

Generative-AI Forgery

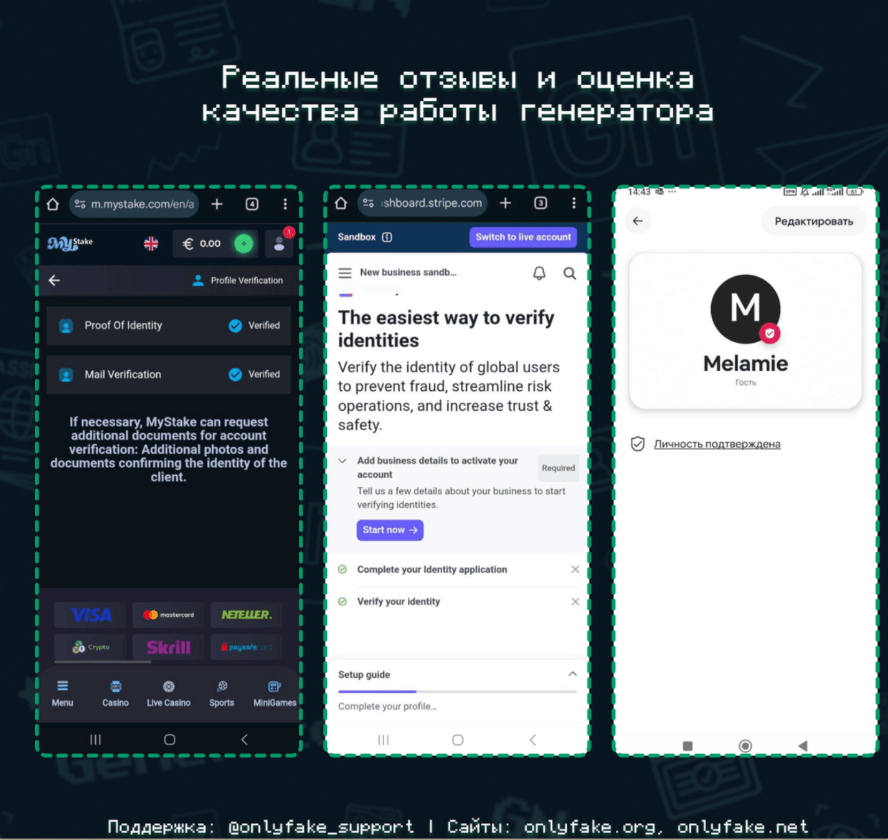

Threat actors are no longer just producing visual fakes; they are creating data packages designed to bypass sophisticated verification requirements. AI-enabled tools, such as OnlyFake, allow for the creation of high-fidelity government identification in seconds. Recent intelligence from Russian-speaking fraud communities indicates that these services are specifically optimized to bypass automated Know Your Customer (KYC) and identity verification controls.

Although threat actors often exaggerate these claims, such services demonstrate how generative AI is being used to scale synthetic identity fraud and lower the barrier to entry for non-technical actors.

The Rise of Deepfakes

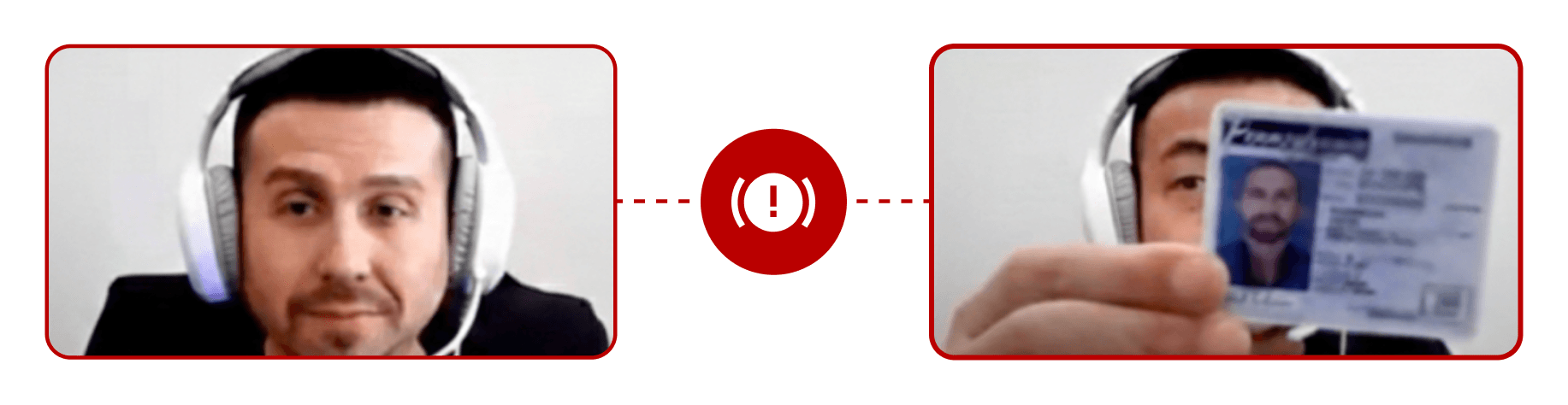

Identity fraud is increasingly shaped by threat actors who use deepfake technology to mimic liveness. These actors have moved beyond holding high-resolution photos or masks up to a camera; they now target the video pipeline itself through injection-based attacks. Using virtual camera software, they feed hyper-realistic deepfakes directly into an authentication system’s video stream during a liveness check, often pairing the injected feed with a fraudulent ID that features a matching synthetic image.

To carry out these attacks in real time, threat actors now use AI tools to apply face-swap overlays during live video calls. These overlays reproduce natural movement, such as blinking and shifting expressions, and can appear entirely normal to a human reviewer. The speed and quality of AI-generated movement are beginning to outpace human detection.

ID.me first witnessed deepfake-enabled fraud attempts in 2024. These deepfakes were largely unsophisticated and relied on freely available animation tools applied to static images of identity documents. Human reviewers were typically able to identify anomalies quickly and stop attacks before account compromise. Since 2024, real-time deepfake capabilities have advanced rapidly. Limitations observed in early attempts, such as those featured in a 60 Minutes investigative segment, have been significantly reduced in newer implementations. Today’s deepfake attacks increasingly maintain visual continuity during live video sessions or bypass the camera entirely through direct video-stream injection.

AI-enabled Threat Evolution

This capability shift has materially lowered the barriers for threat actors to scale more effective fraud schemes. Synthetic identities are easier to produce, social engineering attempts are more convincing, and fraudsters can generate higher-quality artifacts to deceive customer service agents and downstream workflows.

Synthetic Identities

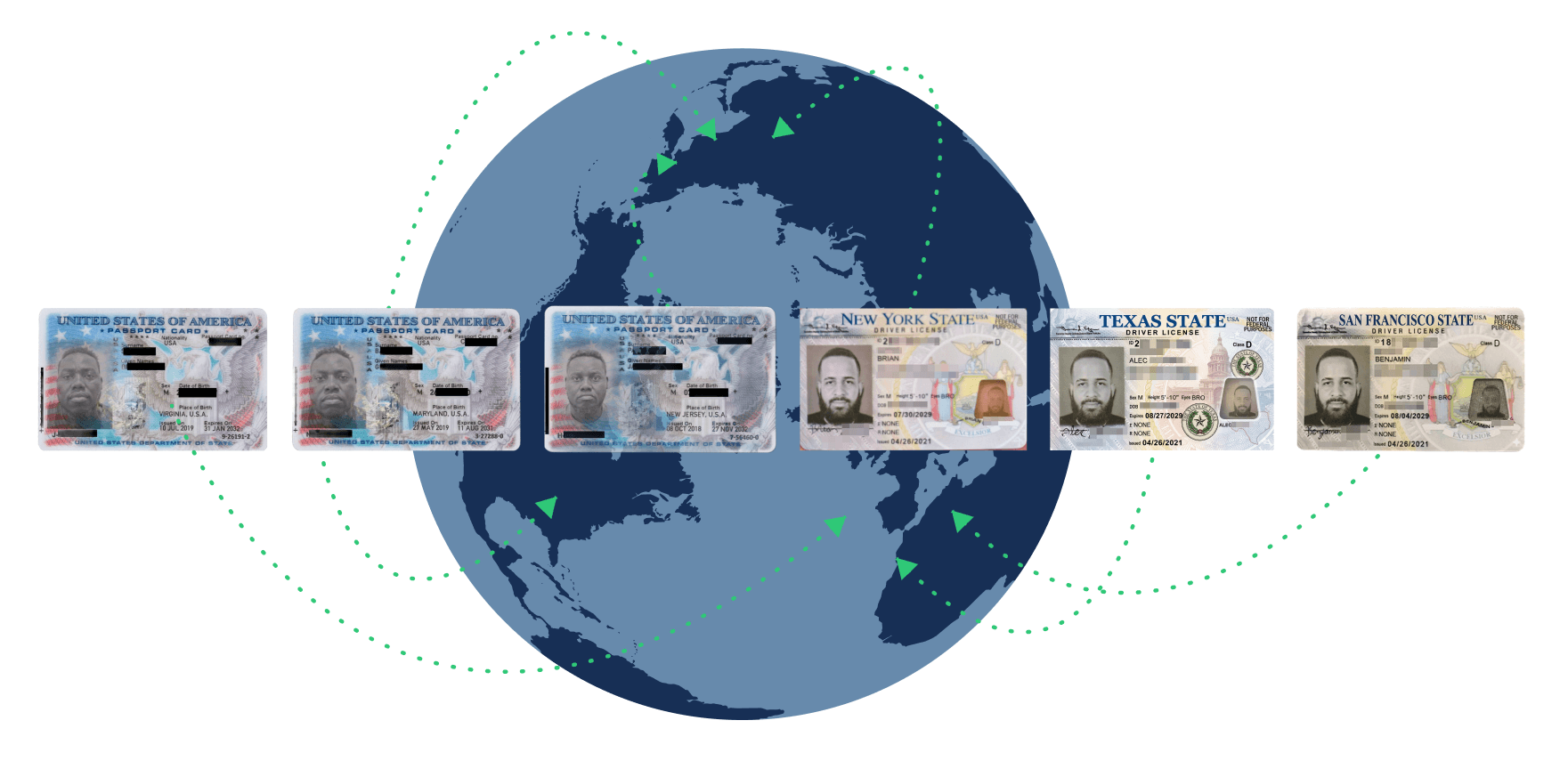

The true danger of the current landscape is not just the quality of individual fakes, but the seamless integration of forged documents and biometric bypasses. Threat actors are now moving beyond isolated artifacts to create comprehensive identity packages that satisfy every layer of the verification funnel. By pairing a high-fidelity government ID with a deepfake video feed that matches the license photo, attackers can simulate a consistent digital persona.

This convergence effectively neutralizes traditional, static defense-in-depth strategies. ID.me addresses this risk through a multi-layered detection engine that moves beyond simple visual inspection.

AI-Driven Social Engineering

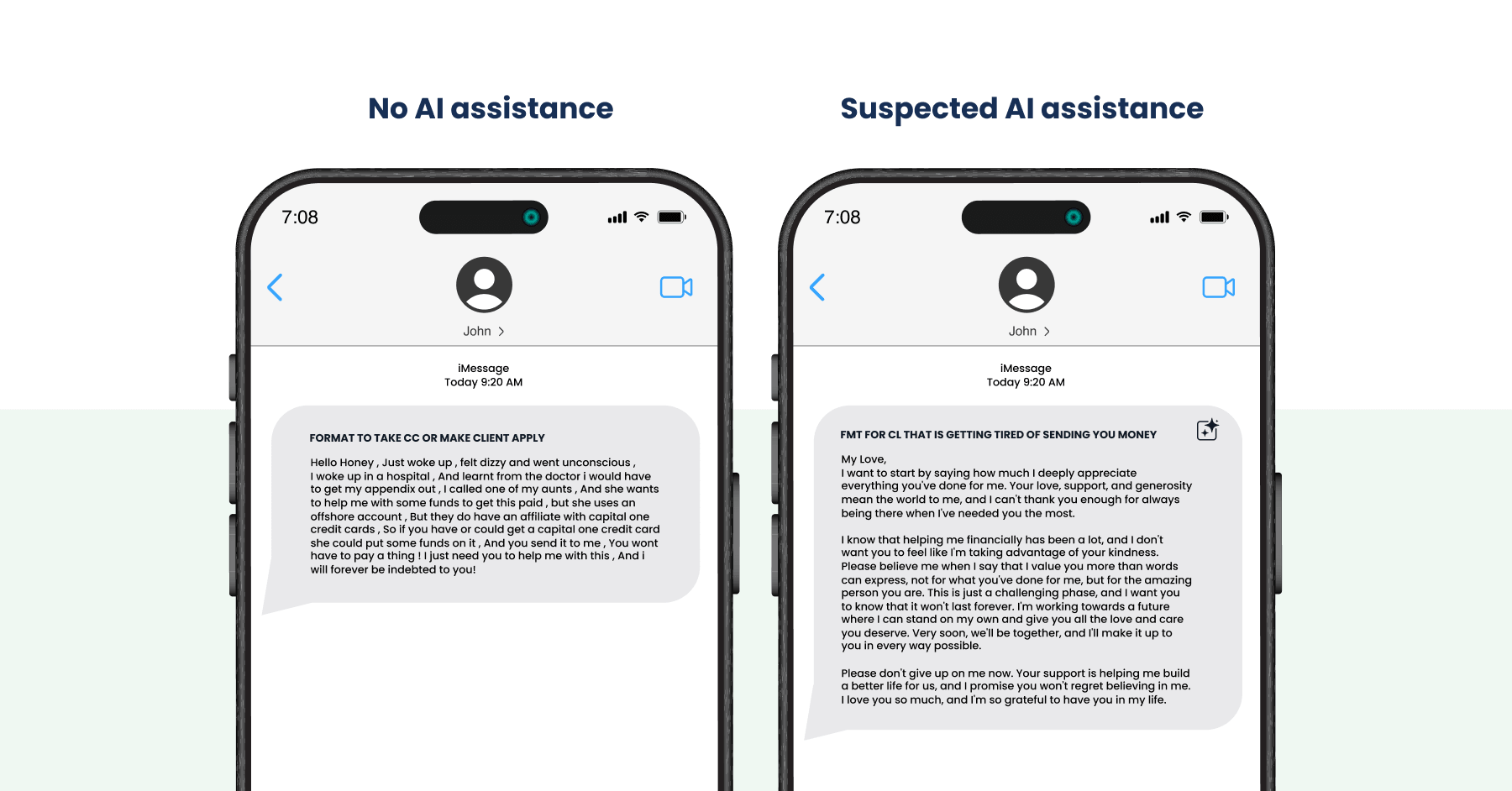

Before the widespread use of generative AI, social engineering campaigns relied heavily on manually written scripts and reusable “formats,” or message templates, shared across fraud communities. These scripts were frequently copied, lightly modified, and redistributed, producing messages that followed predictable narratives and exhibited consistent linguistic and structural flaws. Although such scripts were effective in some cases, these flaws increased detection risk and reduced success rates, particularly when targets or customer service representatives scrutinized message quality.

The Erosion of Traditional Detection Signals

The adoption of AI-assisted social engineering has fundamentally changed how threat actors interact with victims through online messaging platforms. Threat actors can now generate and refine messages rapidly, test variations at scale, and adapt tone or content in response to victim engagement. This shift enables higher rates of contact and sustained interaction with victims, producing campaigns that more closely resemble legitimate communications and increasing both the volume and effectiveness of social engineering attempts.

Using AI to Target Customer Service Workflows

The move toward AI-driven communication has altered the threat landscape by removing many of the constraints that historically limited the scalability of social engineering campaigns targeting customer service workflows. Unlike communications directed at victims, where attackers rely on long-term engagement tactics such as romance and trust-building to maintain interest, interactions with customer service representatives are transactional and procedural in nature.

AI enables automated personalization at scale, allowing a single actor to generate thousands of unique, contextually appropriate customer service requests in seconds. Variations tailored to specific workflows or verification requirements can be produced instantly, making reused scripts difficult to detect and transforming social engineering against customer service operations from a manual, low-volume activity into a high-throughput process with minimal tradeoffs in message quality.

ID.me has observed this activity directly: threat actors have recently contacted ID.me support to request reinstatement of suspended wallets. AI tools can generate numerous variations of such requests, making fraudulent activity harder to detect.

Employment Fraud is on the Rise

The fraud landscape has expanded beyond financial crime and consumer identity theft to encompass a more consequential attack surface: the U.S. employment system itself. Nation-state actors, principally the Democratic People’s Republic of Korea (DPRK), have identified the structural absence of persistent, verified identity across the hiring and onboarding lifecycle as a strategic vulnerability, and are exploiting it systematically.

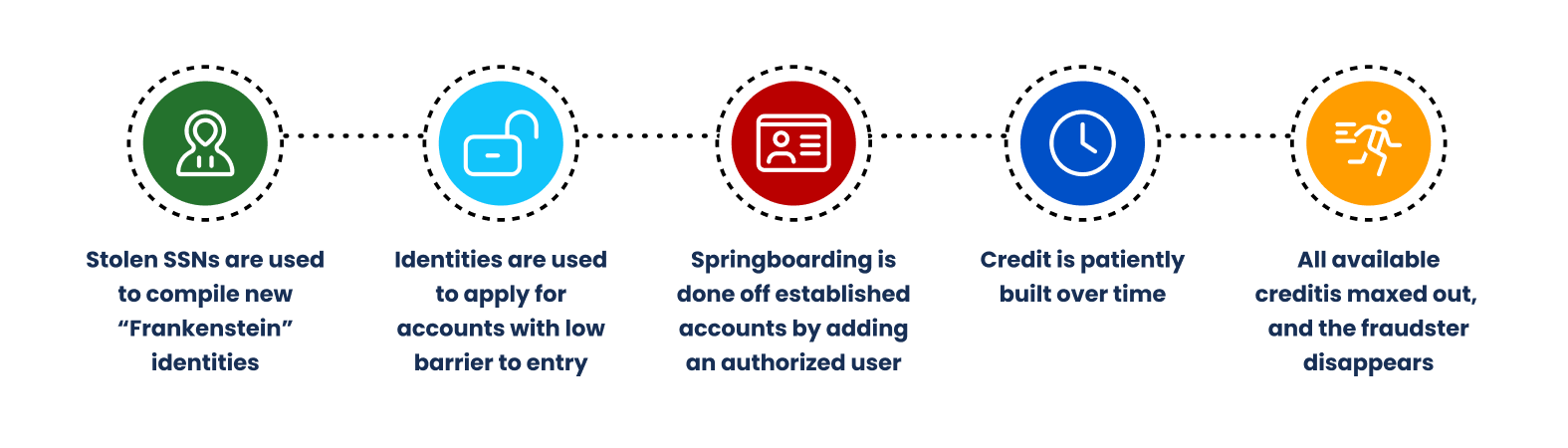

The U.S. hiring process contains no continuous identity layer. Each step inherits identity from the step before it without independent verification, creating an “inherited identity” problem. The result is an entire access architecture built on an unverified chain of assumptions.

The DPRK has industrialized the exploitation of this gap. The FBI has confirmed that more than 300 U.S. companies, including Fortune 500 firms, have unknowingly hired DPRK operatives. The United Nations estimates the scheme generates between $250 million and $600 million annually for the North Korean regime, with funds directly supporting its nuclear weapons and ballistic missile programs. Operatives acquire stolen identities, construct fabricated professional personas, and apply for remote positions in software engineering, full-stack development, and AI/ML roles.

Generative AI has dramatically accelerated this threat. Palo Alto Networks’ Unit 42 demonstrated that a single researcher with no image manipulation experience could create a convincing synthetic identity suitable for video interviews in 70 minutes using a consumer-grade computer. When technical controls prove difficult to defeat, DPRK operatives have also recruited U.S. citizens as human proxies: individuals who appear on camera for interviews and identity verification while the operative performs the actual work remotely.

The threat has escalated beyond revenue generation. In January 2025, the FBI warned that DPRK IT workers are now conducting data extortion, exfiltrating proprietary source code, and deploying malware from within the organizations that hired them. ID.me is actively detecting and stopping these attacks. As of February 2026, ID.me’s fraud operations team has suspended more than 130 wallets directly linked to DPRK threat actors, with wallet creation attempts from DPRK-linked actors increasing 200% between March and November 2025. The implication is direct: the employment system is now an active threat vector, and identity verification cannot be treated as a one-time checkpoint.

What’s Next: Fraud in 2026

The current fraud landscape has confirmed that fraud is no longer driven by isolated threat actors seeking opportunistic gain. Powered by generative AI, it has matured into a scalable, transnational ecosystem that enables low-skilled actors to execute operations once reserved for highly coordinated and well-resourced groups. From the creation of synthetic hybrid identities that bypass legacy KYC checks to the injection of real-time deepfakes into liveness streams, threat actors are rapidly evolving their tactics.

ID.me is positioned as the industry leader with advanced detection tools that match the speed of adversary iteration.

Below are the key fraud trends and ID.me’s predictions for 2026.

| Key Trend | ID.me Prediction for 2026 |
|---|---|
| Synthetic Identity | ID.me expects fraud to shift toward the rapid creation of hyper-realistic, verification-ready identities. Initial submissions may be high-fidelity enough to pass checks on the first attempt. |
| Deepfake Evolution | Deepfake technology will continue to improve until fakes are indistinguishable to the human eye. Organizations must deploy advanced detection tools that match the speed of adversary iteration to protect both supervised and automated verification workflows. |
| Complex Social Engineering Schemes | AI-driven social engineering will erode traditional fraud detection signals as threat actors use generative AI to produce hyper-scalable and linguistically flawless campaigns that closely mimic legitimate communications and adapt to the victim. |
| Attacks Targeting Customer Service Workflows | When adversaries fail to defeat automated controls, they will pivot to the human element. In practice, this means human-led customer service workflows will become an attractive target. Defending them will require advanced detection mechanisms, including those powered by AI. |
| Employment Fraud | As DPRK operatives and other sophisticated threat actors continue to exploit gaps in the U.S. hiring lifecycle, ID.me expects a significant increase in synthetic identity packages specifically engineered to pass pre-employment verification workflows. Attacks will grow more sophisticated, combining AI-generated credentials, real-time deepfakes, and domestic human proxies to defeat layered controls. Organizations that treat identity verification as a one-time checkpoint will face the greatest exposure. |